How does OpenWater’s mind reader work?!

When I first watched Mary Lou Jepsen’s TED talk, it reminded me of the machine in Minority Report that reads the precogs' minds with light. “What a stupid idea,” I thought. “Light cannot go through brain tissue.” Now I know that near-infrared light can pass not only through brain tissue but also through the skull.

It’s a very exciting development because it promises a non-invasive way to gain insight into brain function. No needles or wires are needed; you only have to wear a weird, glowing cap on your head. I thought it would be worth reading a bit more about this technology, and after a quick search I found Jepsen’s patent on Google Patents.

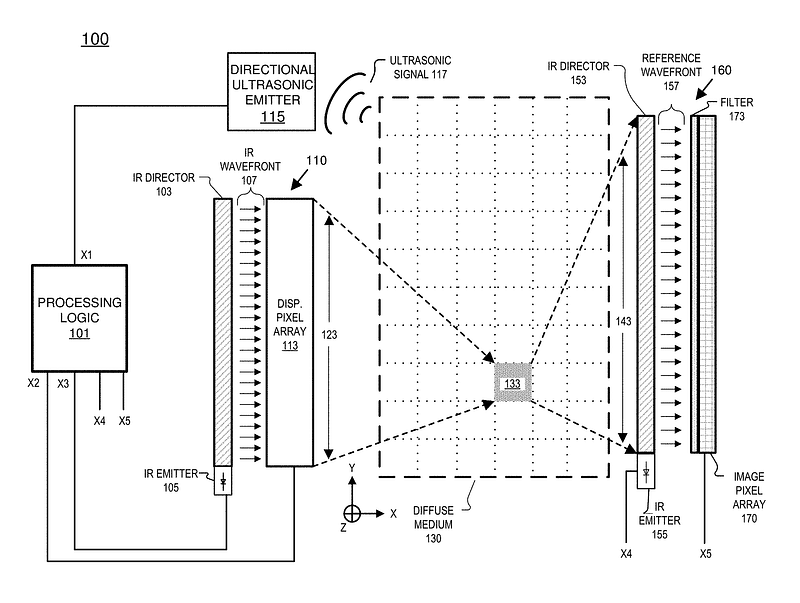

The picture above shows how the system works. On the left side is the “holographic projector” (110), which projects holographic light patterns into the tissue. The projector is built from an infrared laser (105), an infrared director (103), and a special LCD pixel array (113). This pixel array is similar to your laptop screen, but it adjusts not only the intensity of the light passing through each pixel but also its phase.
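To make the phase idea concrete, here is a small Python sketch that models each pixel of the array as multiplying the incoming laser field by a complex factor: an amplitude between 0 and 1 times a phase delay. The function names and the four-pixel example are illustrative assumptions, not anything taken from the patent. Two patterns with the same amplitudes but different phases produce identical intensity images, even though the light leaving the array is different.

```python
import cmath
import math

def modulate(incident, amplitudes, phases):
    """Hypothetical phase-and-amplitude pixel array.

    Each pixel scales the incident complex field by an amplitude (0..1)
    and shifts its phase by the given angle in radians.
    """
    return [e * a * cmath.exp(1j * p) for e, a, p in zip(incident, amplitudes, phases)]

def intensity(field):
    """What an ordinary camera or screen 'sees': |E|^2 per pixel."""
    return [abs(e) ** 2 for e in field]

laser = [1 + 0j] * 4                  # uniform laser beam hitting 4 pixels
amplitudes = [1.0, 0.5, 1.0, 0.5]

flat = modulate(laser, amplitudes, [0.0, 0.0, 0.0, 0.0])
shaped = modulate(laser, amplitudes, [0.0, math.pi / 2, math.pi, 3 * math.pi / 2])

# Same intensity image...
print(all(math.isclose(a, b) for a, b in zip(intensity(flat), intensity(shaped))))  # True
# ...but a different light field leaving the array.
print(any(abs(a - b) > 1e-9 for a, b in zip(flat, shaped)))  # True
```

An amplitude-only screen can only change the first of these two things, which is why the phase control matters here.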

The essence of holography is that it captures the phase of the light as well as its intensity. An ordinary photo records only intensity, and the phase information is lost; this is why a photo looks flat. When you look at a hologram, your eyes receive the same light they would receive from the object itself, which is why it looks 3D. In Jepsen’s patent, the pixel array shapes the phase of the light in the same way a holographic film does, so “holographic projector” is a fitting name.
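In equations: write the light arriving at one point of a film or sensor as a complex field $E = A e^{i\varphi}$, with amplitude $A$ and phase $\varphi$, and a reference beam as $R = r e^{i\theta}$ (these symbols are introduced here only for illustration). A photo records only the intensity, in which the phase cancels out, while a hologram records the interference of the object light with the reference beam, and its cross term keeps the phase:

$$
\begin{aligned}
I_{\text{photo}} &= |E|^2 = A^2 \\
I_{\text{holo}} &= |E + R|^2 = |E|^2 + |R|^2 + E R^* + E^* R = A^2 + r^2 + 2Ar\cos(\varphi - \theta)
\end{aligned}
$$

The first expression is the same for every $\varphi$; the second changes as $\varphi$ changes, which is how an interference pattern stores phase information.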

The holographic light pattern passes through the tissue, is scattered by it, and is captured by a “holographic camera” (160). This camera captures not only the intensity of the light but also its phase. It mixes the captured light with a reference beam of exactly the same light the projector uses (same frequency and phase) and records the resulting interference image. This is the same principle that holograms use (an interference image of the reflected light and the reference light), so “holographic camera” is a good name for it.
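The text above doesn't say how the camera turns an interference image into phase values. One standard way is four-step phase-shifting holography, used here as an illustrative stand-in: the reference beam's phase is stepped by 0, π/2, π and 3π/2, one intensity image is recorded at each step, and the complex field at each pixel is recovered from the four images. The sketch below simulates this for a few pixels, assuming a reference beam of known, real amplitude; the function names and pixel values are hypothetical.

```python
import cmath
import math

PHASE_STEPS = [0.0, math.pi / 2, math.pi, 3 * math.pi / 2]

def record_interferograms(object_field, ref_amp=1.0):
    """Simulate four camera frames: |E + R*e^(i*delta)|^2 for each reference phase step."""
    frames = []
    for delta in PHASE_STEPS:
        ref = ref_amp * cmath.exp(1j * delta)
        frames.append([abs(e + ref) ** 2 for e in object_field])
    return frames

def reconstruct_field(frames, ref_amp=1.0):
    """Recover the complex object field (amplitude and phase) from the four frames.

    With R = ref_amp and I(delta) = |E|^2 + |R|^2 + 2*Re(E*conj(R)*e^(-i*delta)):
      I(0)    - I(pi)     = 4*Re(E*conj(R))
      I(pi/2) - I(3*pi/2) = 4*Im(E*conj(R))
    """
    i0, i90, i180, i270 = frames
    return [complex(a - c, b - d) / (4 * ref_amp) for a, b, c, d in zip(i0, i90, i180, i270)]

# Scattered light at three camera pixels (made-up values for the demo).
scattered = [cmath.rect(0.8, 0.3), cmath.rect(0.2, -2.0), cmath.rect(1.5, 2.9)]
frames = record_interferograms(scattered)
recovered = reconstruct_field(frames)

for true, est in zip(scattered, recovered):
    print(f"amplitude {abs(est):.3f} (true {abs(true):.3f}), "
          f"phase {cmath.phase(est):+.3f} (true {cmath.phase(true):+.3f})")
```

Each single frame is only an intensity image, but the four frames together pin down both amplitude and phase at every pixel.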

In summary, a “holographic projector” projects a hologram into the tissue, the tissue scatters it, and on the other side a “holographic camera” captures the scattered light. But how can the system focus on the part of the tissue being inspected? This is where the directional ultrasonic emitter (115) comes in. An ultrasonic signal (117) can be focused accurately on the target tissue. The signal compresses the tissue slightly for a short time, which has no physiological effect, but it shifts the frequency of the light passing through that spot. This light is filtered by a color filter (173) that passes only infrared light. If one image is captured with the ultrasonic signal off and another with it on, the difference between the two images contains information about the inspected part of the tissue.

The amount of information (the number of pixels that differ) depends on the projected holographic pattern, so by iterating this procedure (change the pattern, turn on the signal, capture an image), the system can find the optimal pattern for focusing on the target tissue. This is similar to the way a lens focuses light, except that here the lens is made of flesh, bone, and the holographic display matrix (113). Once the optimal pattern has been found, it can be stored and reused whenever the same part of the tissue needs to be inspected again.
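The search for the optimal pattern can be illustrated with a toy simulation. It borrows the standard wavefront-shaping model rather than anything specified in the patent: light from each pixel reaches the ultrasound focus multiplied by an unknown random complex number (the scattering tissue), and the on/off difference image is reduced to a single number, the intensity of light at the focus. A continuous sequential search then steps each pixel's phase in turn and keeps whichever setting gives the strongest signal. All names, the 64-pixel size, and the 8 phase steps are assumptions for the demo.

```python
import cmath
import math
import random

def make_tissue(n_pixels, seed=0):
    """Hypothetical scattering medium: one random complex transmission coefficient
    per pixel, describing how light from that pixel reaches the ultrasound focus."""
    rng = random.Random(seed)
    return [complex(rng.gauss(0, 1), rng.gauss(0, 1)) / math.sqrt(2) for _ in range(n_pixels)]

def tagged_signal(tissue, phases):
    """Stand-in for (image with ultrasound on) - (image with ultrasound off):
    the intensity of the light that reaches the focal spot."""
    field = sum(t * cmath.exp(1j * p) for t, p in zip(tissue, phases))
    return abs(field) ** 2

def optimize_pattern(tissue, phase_steps=8, passes=2):
    """Continuous sequential search: try each candidate phase on one pixel at a time
    and keep the one that gives the strongest tagged signal."""
    phases = [0.0] * len(tissue)
    candidates = [2 * math.pi * k / phase_steps for k in range(phase_steps)]
    for _ in range(passes):
        for i in range(len(phases)):
            best_phase, best_signal = phases[i], -1.0
            for c in candidates:
                phases[i] = c
                s = tagged_signal(tissue, phases)
                if s > best_signal:
                    best_phase, best_signal = c, s
            phases[i] = best_phase
    return phases

tissue = make_tissue(64)
flat = [0.0] * len(tissue)
focused = optimize_pattern(tissue)
best_possible = sum(abs(t) for t in tissue) ** 2  # all contributions perfectly in phase

print(f"flat pattern:    {tagged_signal(tissue, flat):.1f}")
print(f"focused pattern: {tagged_signal(tissue, focused):.1f}")
print(f"upper bound:     {best_possible:.1f}")
```

With phase-only control, the best possible signal is (Σ|t_k|)², so comparing against that bound shows how close the search gets. A flat pattern lands on average near Σ|t_k|², roughly a factor of N lower, which is the toy-model version of the light being "unfocused" by the tissue.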

Ultrasound is one possible trigger, but not the only one. Other triggers can be used, such as looking at a photo or thinking of a word. When somebody thinks of a word, her neural activity changes (some neurons become active), which changes how light passes through her brain. The system can find the optimal holographic pattern to project in order to focus the light, and it can learn the output patterns that correspond to each word. With such a system, machines could be controlled by thought, people could communicate by telepathy, and so on. It is something like a “light-based deep EEG”. Commercial EEG headsets (such as Emotiv) that can read brain activity already exist, but their capabilities are very limited.
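The patent summary doesn't say how these word patterns would be learned. As a purely illustrative stand-in, here is a nearest-centroid decoder: record several output patterns while a person thinks of each word, average them, and label a new measurement with the closest average. The word signatures and noise below are synthetic numbers, and the function names are hypothetical; nothing here reflects real measurements or OpenWater's actual method.

```python
import math
import random

def train_centroids(examples):
    """examples maps each word to a list of measured output patterns (equal-length lists).
    Returns the average pattern per word."""
    return {
        word: [sum(values) / len(patterns) for values in zip(*patterns)]
        for word, patterns in examples.items()
    }

def decode(pattern, centroids):
    """Label a new measurement with the word whose average pattern is closest (Euclidean)."""
    return min(centroids, key=lambda word: math.dist(pattern, centroids[word]))

# Synthetic data: two made-up "word" signatures plus measurement noise.
rng = random.Random(42)
signatures = {"yes": [1.0, 0.0, 0.0, 1.0], "no": [0.0, 1.0, 1.0, 0.0]}

def noisy(signature):
    return [v + rng.gauss(0, 0.1) for v in signature]

training = {word: [noisy(sig) for _ in range(20)] for word, sig in signatures.items()}
centroids = train_centroids(training)

print(decode(noisy(signatures["yes"]), centroids))
print(decode(noisy(signatures["no"]), centroids))
```

Real brain signals would be far noisier and higher-dimensional, so a practical decoder would need a much richer model; the point here is only the train-then-match structure.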

According to Jepsen, her technology is much more accurate than fMRI, which is the most accurate brain-scanning technology available today. In the video below, you can see an experiment in which images were reconstructed from brain activity measured by an fMRI scanner. The original image is on the left and the reconstructed image on the right.

Jepsen’s technology promises much higher-resolution brain scanning and much better images. In the not-too-distant future, we may be able to record our dreams, just as the precogs' dreams were recorded in Minority Report. And that’s not all! In theory, this technology could not only read from the brain but also write to it, since neurons can be activated by light and ultrasound. What does that mean? In the future, we may be able to project images directly into the brain, and not only images but also smells, flavors, and touch. It could be the key to fully immersive virtual reality (like in The Matrix).

If the promises of OpenWater’s technology become reality, it will be one of the greatest inventions of humankind.

Further reading: